AI Assisted Thematic Analysis: 2026 Qualitative Data Guide

Find the best AI tools for thematic analysis in 2026. Get expert tips on using GenAI to identify key themes in research data and automate your coding process.

When I talk with founders and product teams about AI thematic analysis, the first thing I do is explain what it is in plain language. At its core, thematic analysis is a way of making sense of qualitative data. Traditionally, researchers read transcripts or notes, code them manually and then identify recurring patterns. AI‑powered thematic analysis uses machine learning and natural language processing to help with these steps. It can group similar phrases, spot hidden themes and even tag sentiment across thousands of survey responses or user interviews. Tools such as ChatGPT, NVivo, ATLAS.ti and Looppanel automate parts of the process, but none of them eliminate the need for human judgement. They are research assistants, not replacements.

Why does this matter to early‑stage startups? Product teams are bombarded with feedback – from customer support, beta users and internal stakeholders. Manually combing through a mountain of text slows down decision‑making. AI‑driven thematic analysis speeds up the discovery of patterns so founders can prioritise features, fix pain points and communicate insights succinctly. It makes qualitative research more accessible to non‑experts and frees designers and PMs to focus on problem framing and experimentation. As Nielsen Norman Group (NN/g) notes, AI can speed up research tasks, especially in planning and analysis, but it still needs oversight. Understanding how to wield these tools wisely lets small teams make evidence‑based decisions without drowning in data.

The building blocks

AI‑assisted thematic analysis draws from several disciplines:

- Natural language processing (NLP) – algorithms convert unstructured text into tokens, phrases and vectors. They remove common stopwords and apply stemming so that related words are treated together.

- Machine learning and clustering – unsupervised techniques such as k‑means, hierarchical clustering or latent Dirichlet allocation (LDA) group segments with similar meanings. These clusters form the raw material for themes.

- Sentiment and topic modelling – sentiment analysis classifies text as positive, negative or neutral, while topic models uncover broader subjects across a corpus. These models help researchers see emotional tone alongside content.

- Large language models (LLMs) – systems like ChatGPT can generate summaries, suggest codes and reorganise data based on prompts. They have billions of parameters, are trained largely on public text and can be customised with domain‑specific knowledge.
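
To make the NLP step concrete, here is a minimal sketch of tokenising, stopword removal and stemming using only the Python standard library. The stopword list and the suffix‑stripping rule are deliberately tiny assumptions of mine, standing in for the fuller lists and proper stemmers (Porter's, for example) that real tools use.

```python
import re

# Tiny illustrative stopword list; real projects use a much fuller one.
STOPWORDS = {"the", "a", "an", "is", "was", "and", "to", "of", "it", "i", "in", "for"}

# Crude suffix stripping, standing in for a real stemmer.
SUFFIXES = ("ing", "ed", "es", "s")


def naive_stem(word: str) -> str:
    """Strip one common suffix if at least three letters remain."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def preprocess(text: str) -> list[str]:
    """Lowercase, tokenise on letters, drop stopwords, then stem."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [naive_stem(t) for t in tokens if t not in STOPWORDS]


print(preprocess("The onboarding was confusing and I skipped the tutorials"))
# prints ['onboard', 'confus', 'skipp', 'tutorial']
```

The stems are not always real words ("confus", "skipp"), which is normal for stemming: the goal is only that "tutorial" and "tutorials" end up counted together.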

The difference between manual coding and AI‑assisted coding lies in scale and starting point. Manual coding provides contextual richness because a researcher reads every line. AI coding provides a first pass; it quickly tags recurring noun phrases or sentiment across thousands of entries and suggests clusters. However, not all auto‑generated codes will be useful, and AI often groups many items into an "other" category. Human reviewers still refine, merge and name themes, much like editing a draft.
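
The "other" problem is easy to reproduce. The sketch below is a hypothetical keyword‑based first pass, not how any of the tools named above work internally: the codebook, its keywords and the sample comments are all invented for illustration. Anything that matches no keyword falls into "other", which is exactly the pile a human reviewer has to sort out.

```python
import re
from collections import Counter

# Hypothetical codebook: code name -> keywords that trigger it.
CODEBOOK = {
    "onboarding": {"onboarding", "tutorial", "setup"},
    "integrations": {"integration", "integrations", "slack", "api"},
}


def first_pass_code(comment: str) -> str:
    """Return the code with the most keyword hits, or 'other' if none match."""
    tokens = set(re.findall(r"[a-z]+", comment.lower()))
    scores = {code: len(tokens & keywords) for code, keywords in CODEBOOK.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "other"


comments = [
    "Setup took ages",
    "We need a Slack integration",
    "Love the new colours",
    "Billing page keeps timing out",
]
counts = Counter(first_pass_code(c) for c in comments)
print(counts["other"] / len(comments))  # share of comments left for human review
```

Tracking the share of items in "other" is a cheap signal that the codebook, or the tool's clustering, needs another look.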

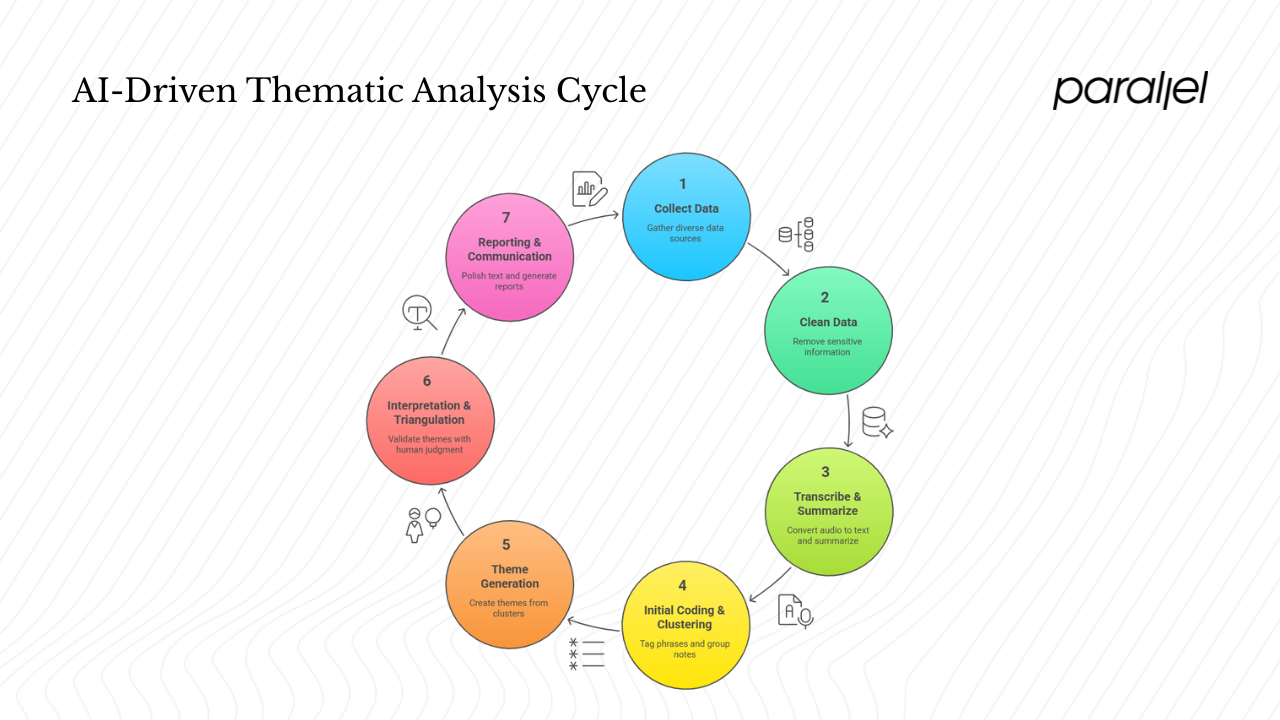

How AI‑driven thematic analysis works

I’ve worked with teams that adopt AI‑powered workflows to sift through customer feedback. The process typically follows these steps:

- Collect and prepare data – Pull transcripts, notes, survey responses or support tickets into one place. Platforms like NVivo and Looppanel allow you to import text, audio, video or spreadsheets. If you’re using ChatGPT or a custom GPT, you’ll need to feed it the documents or manually paste them into prompts.

- Clean and preprocess – Remove personally identifiable information and sanitise the data. NVivo’s AI assistant can scrub names and addresses automatically, though you still need to check for mistakes.

- Transcribe and summarise – For audio or video data, transcription tools convert speech into text and link timestamps. AI summarisation features provide an overview of each session. Nielsen Norman Group advises double‑checking these summaries because AI can misunderstand context.

- Initial coding and clustering – AI features take a first pass, tagging common phrases and grouping notes into clusters. NVivo’s auto‑coding finds recurring noun phrases and forms broad topic areas. Tools like Dovetail or Looppanel cluster highlights from multiple interviews, although many highlights often end up in an "other" bucket. This stage accelerates analysis but is not the final word.

- Theme generation – Researchers review clusters, merge duplicates and create themes. Unsupervised algorithms such as LDA can help identify latent topics. ChatGPT models can reorganise clusters into themes when given examples (few‑shot prompts), but their output varies with the quality of prompts.

- Interpretation and triangulation – Here, human judgement returns to the forefront. Validate AI‑generated themes by comparing them with manual coding or alternative models. The Bennett Institute study demonstrates using topic modelling to validate ChatGPT‑generated codes. This cross‑checking guards against misinterpretation and bias.

- Reporting and communication – AI tools can help polish text, tailor language to specific audiences and avoid jargon. They can generate first drafts of personas or journey maps (based on actual research) and summarise findings. However, researchers must ensure outputs are grounded in real data, not hallucinations.
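
For teams pasting data into a general‑purpose LLM rather than using a platform with built‑in scrubbing, the cleaning step can be approximated in code. This is a rough sketch under my own assumptions: the regular expressions are simplistic, the placeholder tokens are arbitrary, and the list of participant names has to be supplied by you. It will miss things, so a manual check is still needed.

```python
import re

# Simplistic patterns; real PII detection needs far more care than this.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(text: str, known_names: list[str]) -> str:
    """Replace emails, phone-like numbers and listed names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for name in known_names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[NAME]", text, flags=re.IGNORECASE)
    return text


raw = "Maria Lopez (maria@example.com, +1 415 555 0100) said setup was slow."
print(scrub(raw, ["Maria Lopez"]))
# prints [NAME] ([EMAIL], [PHONE]) said setup was slow.
```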

The role of unsupervised learning

Unsupervised techniques are central to AI thematic analysis because they find patterns without labeled training data. LDA assumes documents are mixtures of topics and topics are distributions over words; it reveals themes by identifying word co‑occurrence patterns. Clustering algorithms like k‑means or hierarchical clustering group similar comments based on vectorised representations. These techniques allow researchers to see emergent themes without imposing a pre‑defined framework. Sentiment analysis and named‑entity recognition further enrich clusters by attaching emotional and contextual tags.
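
To show what "grouping similar comments based on vectorised representations" means in practice, here is a small pure‑Python k‑means sketch. It makes several simplifying assumptions: comments become normalised bag‑of‑words vectors with no stopword removal, the starting centroids are chosen with a deterministic farthest‑point rule instead of random initialisation, and the four sample comments are invented. Production tools typically use richer embeddings and library implementations.

```python
import math
import re
from collections import Counter


def vectorise(texts):
    """Turn each text into an L2-normalised bag-of-words vector."""
    token_lists = [re.findall(r"[a-z]+", t.lower()) for t in texts]
    vocab = sorted({tok for toks in token_lists for tok in toks})
    vectors = []
    for toks in token_lists:
        counts = Counter(toks)
        vec = [float(counts[w]) for w in vocab]
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        vectors.append([x / norm for x in vec])
    return vectors


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors, k, iterations=20):
    """Plain k-means with deterministic farthest-point initialisation."""
    centroids = [vectors[0]]
    while len(centroids) < k:
        # Add the point that is farthest from its nearest existing centroid.
        centroids.append(max(vectors, key=lambda v: min(sq_dist(v, c) for c in centroids)))
    labels = [0] * len(vectors)
    for _ in range(iterations):
        # Assignment step: each vector joins its nearest centroid.
        labels = [min(range(k), key=lambda j: sq_dist(v, centroids[j])) for v in vectors]
        # Update step: each centroid moves to the mean of its members.
        for j in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == j]
            if members:  # keep the old centroid if a cluster empties out
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels


comments = [
    "onboarding tutorial was confusing",
    "the onboarding tutorial felt too long",
    "missing slack integration",
    "need a slack integration for alerts",
]
labels = kmeans(vectorise(comments), k=2)
print(labels)  # prints [0, 0, 1, 1]
```

The algorithm only returns cluster numbers. Deciding that cluster 0 means "onboarding friction" and cluster 1 means "missing integrations" is still the researcher's job.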

Large language models like ChatGPT bring another layer of flexibility. They can perform classification, summarisation and code suggestion within a conversational interface. However, they are stochastic; they may focus on the wrong aspects or even fabricate information. Prompt engineering techniques—few‑shot examples, chain of thought and role play—help steer outputs. But the quality of results depends on the prompt and domain knowledge provided.
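
A few‑shot prompt is just structured text, so it can be assembled in code and reused across a dataset. The sketch below only builds the prompt string and does not call any model API. The wording of the instructions, the function name and the example codes are my own assumptions, not a format recommended by any vendor.

```python
def build_few_shot_prompt(examples, new_excerpt, codebook):
    """Assemble a few-shot coding prompt from (excerpt, code) example pairs."""
    lines = [
        "You are assisting a qualitative researcher with first-pass coding.",
        "Assign exactly one code from this list: " + ", ".join(codebook) + ".",
        "If none fits, answer 'other'. Do not invent quotes or sources.",
        "",
    ]
    for excerpt, code in examples:
        lines.append(f'Excerpt: "{excerpt}"')
        lines.append(f"Code: {code}")
        lines.append("")
    # The final excerpt is left uncoded for the model to complete.
    lines.append(f'Excerpt: "{new_excerpt}"')
    lines.append("Code:")
    return "\n".join(lines)


examples = [
    ("I gave up halfway through the setup wizard.", "onboarding"),
    ("It doesn't connect to our CRM.", "integrations"),
]
prompt = build_few_shot_prompt(
    examples,
    "The tutorial videos were out of date.",
    codebook=["onboarding", "integrations", "pricing"],
)
print(prompt)
```

Keeping the examples in a list makes it easy to swap them and see how much the output changes, which is a practical way to test prompt sensitivity.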

Benefits and limitations for early‑stage teams

Why founders and PMs should care

AI‑assisted thematic analysis offers several advantages when resources are limited and decisions must be fast:

- Speed and scalability – AI can process large datasets in minutes. Tools like HeyMarvin highlight that automated theme generation and sentiment detection make analysts "10× more efficient".

- Consistency – Algorithms apply the same rules across the dataset, which can reduce variation between coders and help standardise insights. NVivo’s auto‑coding uses your initial patterns to suggest child codes, which helps codes get applied uniformly.

- Accessibility – PMs or designers without formal research training can start with AI to uncover high‑level themes. NN/g notes that AI tools are helpful for desk research and ideation during planning, generating possible research goals, method options or interview questions.

- Time to value – Automated transcription, summarisation and clustering free up time for experimentation and product improvements. Instead of spending weeks coding survey responses, you can act on insights within days.

Real‑world examples abound. In a pilot by the Bennett Institute, researchers customised a GPT model to code UN policy documents. The AI accelerated coding, expanded the empirical base and produced clusters comparable to manual analysis. In my own client work with SaaS startups, we’ve used auto‑clustering to sift through thousands of NPS comments. The tool surfaced recurring frustrations around onboarding and missing integrations that we then validated through follow‑up interviews. It shortened the discovery phase from three weeks to three days and led to an improved onboarding flow that reduced time to value by 30 percent.

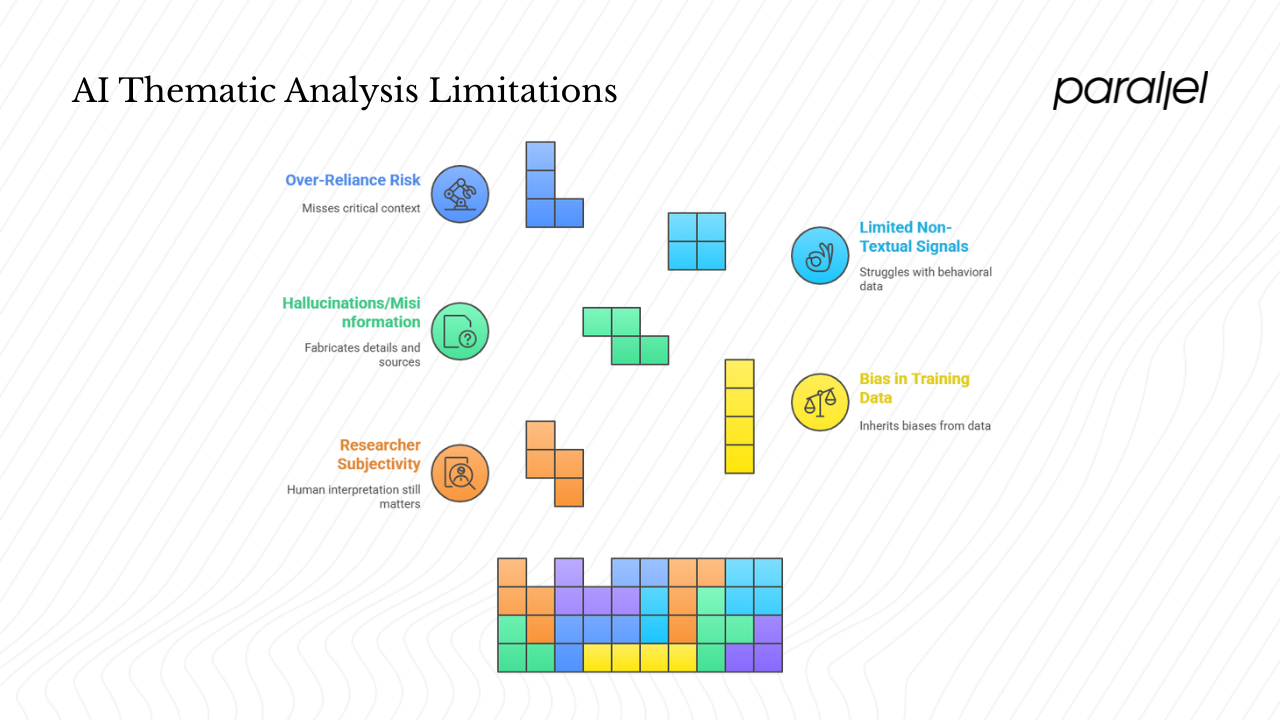

When AI falls short

The promise of AI thematic analysis doesn’t negate its limits. There are several caveats founders should keep in mind:

- Researcher subjectivity – Human interpretation still matters. Thematic analysis is interpretive; researchers must decide how codes are grouped into themes. Tools like ChatGPT may assign codes, but someone must judge whether the clusters make sense in context.

- Bias in training data – AI models inherit biases from the data they were trained on. A model trained predominantly on Western English sources may misinterpret vernacular or cultural nuance. The Bennett Institute paper stresses that the reliability of AI‑supported analysis is under scrutiny and cross‑referencing with manual analysis is essential.

- Hallucinations and misinformation – Generative models sometimes fabricate details. NN/g warns that AI systems can return inaccurate information or made‑up sources during desk research. The article advises always asking AI systems to cite primary sources and double‑checking those sources.

- Limited understanding of non‑textual signals – AI struggles with behavioural data. NN/g points out that AI cannot observe usability testing or interpret nonverbal cues. Tools may summarise transcripts but miss what users did or felt in the session.

- Over‑reliance risk – AI coding should be treated as an initial pass, not the final analysis. NN/g emphasises that a human must translate coded data into insights. Over‑reliance on AI may cause you to miss critical context such as a participant being embarrassed to tell the truth.

In practice, the best results come from blending AI and human expertise. Let AI handle rote tasks like transcription, initial coding and clustering. Then bring in experienced researchers or product people to interpret the themes, ask deeper questions and cross‑validate with other data sources.

Manual vs AI‑assisted analysis

| Aspect | Manual Thematic Analysis | AI-Assisted Analysis |
|---|---|---|
| Strengths | Interprets tone, emotion, sarcasm, and subtle meaning. Connects fragmented ideas into coherent insights. Provides depth and texture to findings. | Handles large volumes of data quickly. Identifies high-frequency themes and sentiment trends. Efficient for the initial review or pattern detection. |
| Weaknesses | Time-consuming and labour-intensive. Vulnerable to researcher bias and inconsistency between coders. | Misses emotional undertones, sarcasm, or cultural references. May misclassify nuanced statements or flatten unique responses into generic clusters. |
| Ideal Use Cases | Understanding complex motivations, emotions, and contextual factors. Investigating small, detailed datasets or specific issues like customer trust or churn. | Processing large datasets such as survey responses, social media feedback, or product reviews. Generating an overview before deeper manual interpretation. |
| Human Role | Central: researchers interpret meaning and decide how data fits into broader narratives. | Supervisory: humans define rules, validate results, and refine algorithmic output to ensure accuracy. |
| Speed and Scale | Slow, limited by human capacity. | Fast, scalable across thousands of data points. |
| Accuracy and Bias | High contextual accuracy but subjective bias risk. | Consistent in structure but lacks interpretive depth; prone to systematic errors. |
| Best Practice | Use for deep dives and when context or emotion matters most. | Use for first-pass analysis or as a triangulation tool to cross-check insights. Combine with manual review for balance. |
| Analogy | A seasoned ethnographer who reads between the lines. | An eager intern: quick, capable, but needing direction, supervision, and correction. |

Practical applications and tools for startups

Where AI thematic analysis adds value

Early‑stage teams juggle multiple streams of qualitative data. Here’s where AI‑assisted thematic analysis can be especially useful:

- Product discovery and user research – During discovery, AI can help synthesise interviews or survey responses to highlight recurring pain points. It can suggest interview questions and brainstorm screening criteria. However, have a human review the final list and adjust the order or wording.

- Customer feedback analysis – Tools such as NVivo’s AI assistant quickly identify recurring noun phrases and group comments into broad topic areas. HeyMarvin’s software can identify patterns and sentiments across open‑ended survey responses, chat logs or reviews. This helps PMs understand what customers love and where they struggle.

- Market insight – Large‑scale text analysis across social media, forums or public reviews can reveal emerging trends. Topic modelling identifies themes in competitor reviews, giving founders early signals about market gaps.

- Team decision‑making – AI summarisation and clustering make it easier to present findings to stakeholders. NVivo can summarise documents and generate charts; AI chatbots in tools like Notion can search research repositories and answer stakeholders’ questions. This helps ensure decisions are grounded in evidence rather than anecdotes.
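
To illustrate the sentiment tagging mentioned above, here is a deliberately naive lexicon‑based classifier. The word lists are invented and far too short for real use, and the approach ignores negation and sarcasm, so treat it as a picture of the idea rather than of how commercial tools score sentiment.

```python
import re

# Hypothetical mini-lexicons; real sentiment lexicons contain thousands of entries.
POSITIVE = {"love", "great", "easy", "fast", "helpful"}
NEGATIVE = {"slow", "confusing", "broken", "hate", "missing"}


def sentiment(text: str) -> str:
    """Label text positive, negative or neutral by counting lexicon hits."""
    tokens = re.findall(r"[a-z]+", text.lower())
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


for comment in [
    "I love the dashboard, it is fast",
    "Export is broken and slow",
    "We use it weekly",
]:
    print(sentiment(comment), "|", comment)
```

A comment such as "Great, another broken export" would score as neutral here, which is a small‑scale version of the sarcasm weakness listed in the comparison table.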

Tools and platforms

Today’s ecosystem offers a spectrum of solutions, from all‑in‑one platforms to stand‑alone LLMs:

- NVivo 15 with Lumivero AI Assistant – NVivo integrates AI summarisation, flexible coding suggestions and auto‑coding. It identifies recurring noun phrases and groups them into topics; it offers sentiment categorisation and user‑driven machine learning that refines codes based on your input. It can scrub personally identifying information and summarise documents in seconds.

- ATLAS.ti – Another long‑standing qualitative analysis tool with AI features for automated coding and sentiment analysis. It supports large datasets and integrates with other data sources.

- Looppanel and Dovetail – These products automate transcription, highlight extraction and clustering of notes from user interviews. They provide AI‑assisted tagging and theme discovery. Dovetail clusters highlights but often places many notes in an "other" category, which requires human reorganisation.

- HeyMarvin – A newer entrant focused on AI‑driven qualitative analysis. It offers automatic theme identification, sentiment analysis, elaboration and visualisation, claiming to make analysts "ten times more efficient".

- ChatGPT and custom GPTs – LLMs can be tailored to your domain using uploaded knowledge bases or examples. They are flexible assistants for coding, clustering and summarising text. Bennett Institute’s pilot created a custom GPT for initial coding and used topic modelling to validate results. Prompt design is crucial; high‑quality examples and clear instructions improve output.

Picking the right tool depends on your dataset, research workflow and budget. Tools like NVivo excel at mixed methods research and collaboration. Lighter tools like Looppanel or Dovetail suit lean teams that need quick transcription and clustering. ChatGPT or custom GPTs offer flexibility and low cost but require careful prompts and human validation.

Lessons from research and practice

What have we learned from recent experiments and client work?

- Validate AI outputs – The Bennett Institute study compared ChatGPT‑generated codes with topic modelling and manual analysis. It found that AI can standardise coding but needs cross‑checking to ensure accuracy. In our projects, we always triangulate AI results with manual checks and alternative algorithms.

- Design your prompts – Quality prompts matter more than model choice. Few‑shot examples and explicit instructions lead to more meaningful outputs. When we provide ChatGPT with sample codes and ask it to group similar statements, the clusters are much closer to our expectations.

- Provide context and templates – NN/g advises giving AI systems templates and clear study details when asking for documentation or consent forms. This reduces mistakes and ensures relevant outputs.

- Treat AI as an intern – As the NN/g article concludes, AI tools have many limitations and require human oversight. They are useful interns who need instructions, context and constraints to perform. Always double‑check their work and add human insights.
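
One way to put a number on "validate AI outputs" is an inter‑coder agreement statistic. The sketch below computes Cohen's kappa between a human coder and an AI coder on the same excerpts. Using kappa here is my suggestion rather than the method of the studies cited above, and the two label lists are invented.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement between two coders, corrected for chance."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(coder_a)
    # Observed agreement: share of items given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Expected agreement if each coder assigned codes at their own base rates.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:  # both coders used one identical code throughout
        return 1.0
    return (observed - expected) / (1 - expected)


human = ["onboarding", "onboarding", "integration", "pricing", "integration", "onboarding"]
ai = ["onboarding", "integration", "integration", "pricing", "integration", "onboarding"]
kappa = cohens_kappa(human, ai)
print(round(kappa, 3))  # prints 0.739
```

A kappa of 1.0 means perfect agreement and 0 means no better than chance. A low value on a hand‑coded sample is a cue to revisit the prompt or the codebook before trusting the AI pass on the full dataset.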

Looking ahead and concluding thoughts

The future of AI thematic analysis is promising. Advances in unsupervised learning and semantic extraction will improve theme identification and reduce over‑reliance on frequency‑based clustering. Custom LLMs tailored to specific domains will become more accessible. Tools like NVivo and Looppanel are already embedding generative AI features into their platforms. We will likely see hybrid workflows where AI suggests initial codes, analysts refine them, and domain experts interpret results. This hybrid model preserves the richness of qualitative research while improving efficiency.

For founders and product leaders, the takeaway is simple: AI thematic analysis is a powerful aid, not a replacement. Use it to handle the heavy lifting of coding and clustering, but keep researchers close to the data. Invest time in prompt design and validation. Recognise that AI doesn’t understand context or human nuance—yet. With responsible use, AI can help you move faster without sacrificing depth or empathy.

FAQ

Q1. Can you use AI for thematic analysis?

Yes. AI can assist with coding, clustering and theme identification. The Bennett Institute showed that a custom GPT can speed up initial coding, broaden the empirical base and produce consistent clusters. However, AI should be seen as an assistant. Human researchers must interpret themes, validate outputs and provide context, as emphasised by NN/g and the Qualitative Report’s guidance to use AI as a complement rather than a replacement.

Q2. Can ChatGPT do a thematic analysis?

ChatGPT can perform the initial coding and clustering for thematic analysis when prompted appropriately. The Bennett Institute pilot customised ChatGPT to code UN policy documents and found that it saved time and produced useful clusters. Yet the reliability of its analysis depends on prompt quality and requires human validation. Treat ChatGPT’s output as a starting point and refine it with manual insight.

Q3. What is the best AI for qualitative analysis?

There is no single best tool; it depends on your needs. NVivo 15 with its AI assistant offers advanced autocoding, sentiment analysis and document summarisation. ATLAS.ti provides similar features. Looppanel and Dovetail emphasise transcription and clustering of interview highlights. HeyMarvin focuses on theme identification, sentiment and visualisation. ChatGPT or custom GPTs give flexible, low‑cost coding when customised with prompts. Choose based on dataset size, workflow and budget.

Q4. Does NVivo have AI?

Yes. NVivo 15 integrates the Lumivero AI assistant. It offers document summarisation, flexible coding suggestions and AI‑powered autocoding for themes. The assistant can summarise a document in seconds, identify recurring noun phrases, group them into broad topic areas and provide sentiment categorisation. These features speed up initial analysis, but researchers must review and refine the results to ensure accuracy.
