AI in Design: The Complete 2026 Guide

Learn how AI is used in design in 2026. This guide covers tools, workflows, use cases, and how designers can work faster and smarter.

AI in design has moved from novelty to infrastructure. In 2026, product teams that still treat it as a bolt-on experiment are losing ground to those who've rebuilt their workflows around it. This guide covers what the shift actually looks like inside fast-moving SaaS and AI startups — from tool selection and sprint integration to the real tradeoffs founders and PMs need to understand before committing budget and headcount to an AI-assisted design stack.

TL;DR

- AI is reshaping every stage of the design process — research, ideation, prototyping, and handoff.

- The best AI design tools in 2026 reduce repetitive work without replacing strategic judgment.

- Adoption fails most often due to poor integration, not poor tooling.

- Human-centred design remains the non-negotiable foundation. AI accelerates it; it doesn't replace it.

How Is AI Changing the Design Process in 2026?

The most accurate way to describe what's happened: AI has compressed the distance between thinking and making. That changes everything about how a design team operates.

Traditionally, the design process moved through discrete phases (research, synthesis, wireframing, prototyping, testing), with significant time lost between each handoff. AI has collapsed several of those gaps. Research synthesis that once took days of affinity mapping now takes hours with AI qualitative data analysis tools. Wireframes that required dedicated sessions can now emerge from a natural language prompt in Figma AI or Framer AI.

What this produces is not a shorter design process — it's a faster iteration cycle. Teams can run more experiments per sprint, test more concepts before committing, and respond to user feedback without waiting weeks for a revised prototype.

The more substantive change is in how design thinking scales. Design thinking has always been labour-intensive precisely because empathy and synthesis can't be rushed. AI doesn't shortcut empathy — a machine cannot feel what a frustrated user feels — but it dramatically accelerates pattern recognition across qualitative data. That means designers can spend more time on interpretive, strategic work where human judgment is irreplaceable.

Generative UI is a concrete example. Rather than designing states manually, a designer can prompt a system to generate variants, then apply judgment to select, refine, and validate. The cognitive work shifts from execution to curation and critique — arguably more aligned with how good designers think anyway.

Machine learning design systems are also maturing. Systems like Adobe Sensei now surface inconsistencies across components proactively, flagging accessibility issues against the W3C's Web Content Accessibility Guidelines (WCAG) before they reach engineering. Design tokens are becoming dynamic rather than static: AI can suggest token adjustments based on usage patterns across a product.
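To make the automated accessibility check concrete, here is a minimal sketch of the colour contrast calculation defined in WCAG 2.x, which is the kind of rule a tool can evaluate mechanically. The function names are illustrative assumptions, not the API of Adobe Sensei or any Figma plugin. The 4.5:1 and 3:1 thresholds are the WCAG AA values for normal and large text.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a colour written as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colours) up to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """True if the pair meets WCAG AA: 4.5:1 for normal text, 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    # Mid-grey text on a white background, a common near-miss in UI work.
    print(round(contrast_ratio("#777777", "#FFFFFF"), 2))
    print(passes_aa("#777777", "#FFFFFF"))
```

A check like this can tell a designer that a colour pair fails. Choosing a replacement that still fits the brand and the hierarchy of the screen remains a design decision.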

The shift is not "AI replacing designers." It's designers who use AI well outproducing those who don't — faster, at higher quality, with less friction at handoff.

For founders and PMs, the practical implication is this: the bottleneck in your design process is no longer tool capability. It's how deliberately you've integrated AI into your team's actual workflow.

Best AI Design Tools for Product Teams at Startups in 2026

Choosing tools is less about features and more about fit — your team's maturity, your stack, and whether the tool reduces cognitive load or adds it.

For most early-stage AI and SaaS startups, the practical stack is Figma AI for product design work, Midjourney or DALL-E for visual exploration, and a purpose-built research tool for synthesis. Framer AI becomes relevant the moment you need to ship a live front-end quickly from a design file.

AI-powered prototyping tools have matured enough that rapid prototyping no longer requires a full engineering sprint to validate an interaction concept. That's a genuine capability shift, not marketing.

The mistake most founders make is adopting too many tools at once. Pick one entry point — usually Figma AI if your team is already in Figma — embed it into daily practice, and expand from there. Tooling sprawl is one of the documented ways digital transformation projects fail.

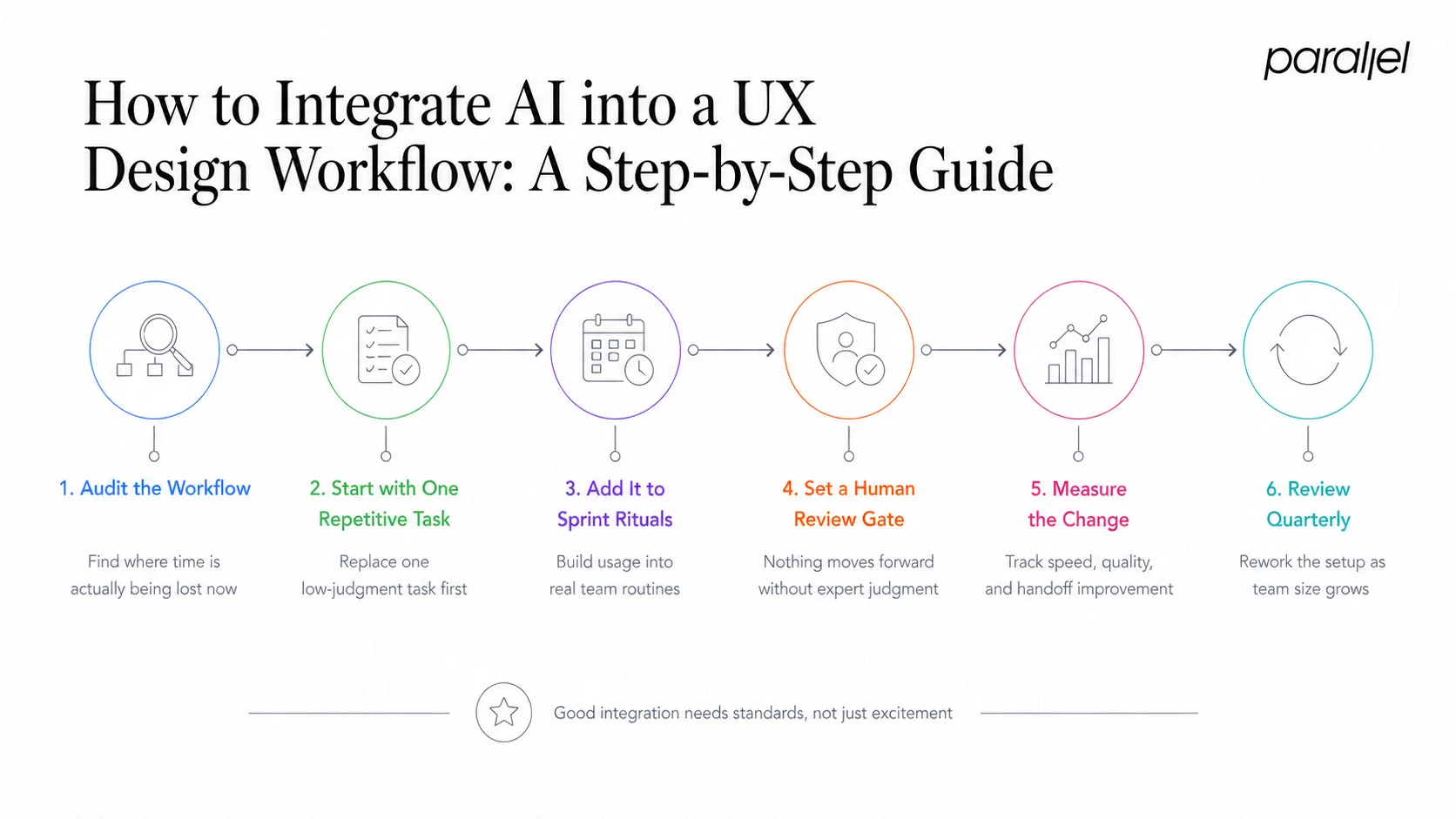

How to Integrate AI into a UX Design Workflow: A Step-by-Step Guide

Integration is where most adoption efforts break down. Teams buy the tool, run a demo, then watch adoption fade within six weeks. Here's what actually works.

- Audit your current workflow: Map where time is lost today — research synthesis, asset creation, annotation, handoff prep. These are your highest-ROI integration points.

- Start with one repetitive task: Pick the highest-friction, lowest-judgment task — e.g., generating copy variants or auto-annotating components — and replace it with an AI step. Build the habit before broadening scope.

- Embed AI into sprint rituals: During sprint grooming, explicitly include an AI-assisted ideation block. Use natural language interfaces to generate initial concepts before the team converges.

- Define a human review gate: Every AI-generated output (layout, copy, component) must pass a designer's judgment before it moves downstream. This is not optional. An unchecked AI output that ships broken UX costs more to fix than the time it saved.

- Instrument your UX metrics framework: Measure velocity, error rate, and handoff quality before and after integration. If you can't measure improvement, you can't justify or scale it.

- Upskill toward prompt craft: The limiting factor for most designers isn't the tool — it's their ability to write precise prompts that yield useful outputs. Invest in prompt literacy as a team skill.

- Review and recalibrate quarterly: What works at 3 people won't work at 12. Revisit your AI workflow every quarter with your team, not just your tooling vendor.

Good AI integration follows the same principles as good design system scalability — it requires governance, not just enthusiasm.
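The "instrument your UX metrics framework" step can be as simple as comparing the same few numbers for a sprint before and a sprint after integration. The sketch below is one way to do that. The field names, the choice of proxies for velocity, error rate, and handoff quality, and the sample values are all illustrative assumptions, not figures from a real team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintMetrics:
    concepts_tested: int    # velocity proxy: tested concepts per sprint
    defects_found: int      # UX defects reported after handoff
    screens_shipped: int    # used to normalise the defect count
    handoff_questions: int  # handoff-quality proxy: clarifications asked by engineering


def error_rate(m: SprintMetrics) -> float:
    """Defects per shipped screen."""
    if m.screens_shipped == 0:
        raise ValueError("screens_shipped must be greater than zero")
    return m.defects_found / m.screens_shipped


def percent_change(before: float, after: float) -> float:
    """Relative change from the baseline, in percent."""
    if before == 0:
        raise ValueError("baseline must be non-zero to compute a percent change")
    return (after - before) / before * 100


def compare(before: SprintMetrics, after: SprintMetrics) -> dict[str, float]:
    """Percent change for each metric. Negative is good for the last two."""
    return {
        "velocity_pct": percent_change(before.concepts_tested, after.concepts_tested),
        "error_rate_pct": percent_change(error_rate(before), error_rate(after)),
        "handoff_questions_pct": percent_change(
            before.handoff_questions, after.handoff_questions
        ),
    }


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    baseline = SprintMetrics(concepts_tested=1, defects_found=6,
                             screens_shipped=20, handoff_questions=15)
    with_ai = SprintMetrics(concepts_tested=2, defects_found=5,
                            screens_shipped=20, handoff_questions=9)
    for name, change in compare(baseline, with_ai).items():
        print(f"{name}: {change:+.1f}%")
```

A single sprint on each side is a weak comparison. Averaging over several sprints before and after gives a more trustworthy signal.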

AI in Design: Pros, Cons, and What Product Leaders Actually Need to Know

This is the section I see missing from most AI coverage: an honest accounting of the tradeoffs.

Where AI genuinely helps:

- Compresses ideation cycles, allowing more concept exploration in less time

- Surfaces accessibility compliance issues earlier and more consistently

- Scales documentation and handoff quality without adding headcount

- Enables non-designers (founders, PMs) to create credible first drafts for early validation

- Reduces the manual overhead of design operations significantly

Where AI creates real risk:

- Outputs are statistically average — trained on what exists, not on what should exist for your specific user

- Over-reliance flattens creative risk-taking; teams converge on safe, familiar patterns

- Accessibility compliance is flagged but not solved — a tool can surface a contrast issue, it cannot design empathy into the experience

- Neural networks optimise for pattern completion, not for the messy, non-linear insight that comes from deep user research

- Ethical considerations in AI design — bias in training data, consent, representation — are live issues that product teams regularly underestimate

The most dangerous outcome of AI in design is not bad outputs. It's confident-looking outputs that skip the hard thinking.

For product leaders specifically: AI shifts the question from "can we build it?" to "should we build it this way?" The strategic judgment layer — informed by genuine human-centred design practice — becomes more important, not less.

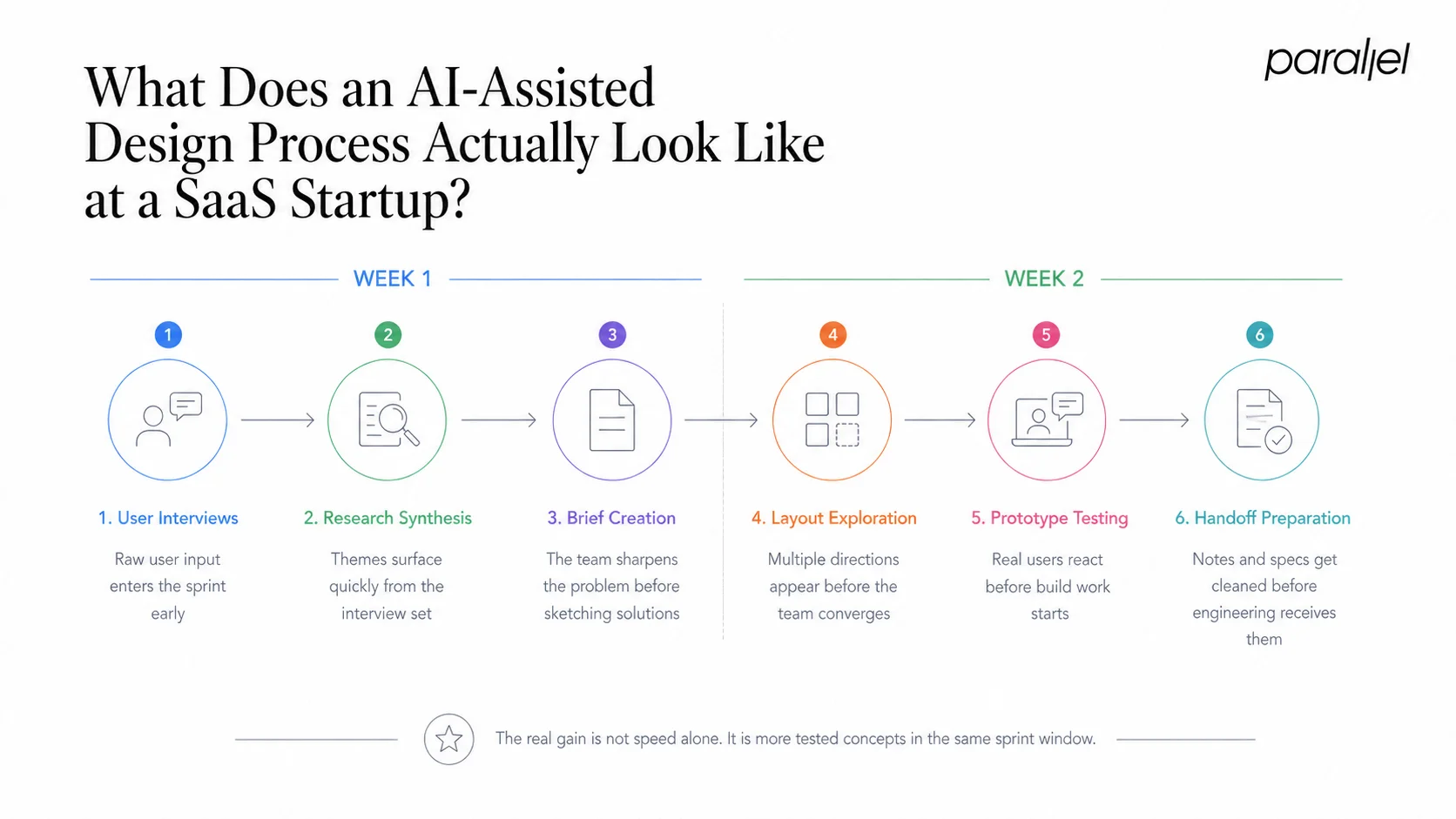

What Does an AI-Assisted Design Process Actually Look Like at a SaaS Startup?

Rather than describing the ideal state, let me walk through how this actually runs at an early-stage SaaS team.

A typical two-week sprint for a product team using AI well might look like this:

Week 1 — Discovery and ideation:

The team runs user interviews. Transcripts go into an AI qualitative research tool for initial synthesis — theme extraction, frequency analysis, sentiment clustering. A designer then reviews the output critically, adds contextual interpretation the model missed, and produces a tighter brief in half the usual time.
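For readers who want a feel for what "theme extraction and frequency analysis" means mechanically, the sketch below counts how many transcripts mention each theme using a hand-written keyword codebook. This is a deliberately simplified stand-in: commercial research tools use language models rather than keyword matching, and the codebook and transcripts here are invented for illustration.

```python
from collections import Counter

# Hypothetical codebook: theme name -> phrases that signal it.
THEME_KEYWORDS = {
    "onboarding": ("sign up", "onboarding", "getting started"),
    "pricing": ("price", "pricing", "expensive"),
    "performance": ("slow", "lag", "loading"),
}


def themes_in(text: str, codebook: dict[str, tuple[str, ...]]) -> set[str]:
    """Themes whose keywords appear in the text (case-insensitive substring match)."""
    lowered = text.lower()
    return {
        theme
        for theme, keywords in codebook.items()
        if any(keyword in lowered for keyword in keywords)
    }


def theme_frequency(transcripts: list[str],
                    codebook: dict[str, tuple[str, ...]]) -> Counter:
    """Number of transcripts that mention each theme at least once."""
    counts: Counter = Counter()
    for transcript in transcripts:
        counts.update(themes_in(transcript, codebook))
    return counts


if __name__ == "__main__":
    # Invented interview snippets.
    interviews = [
        "The sign up flow was confusing and the dashboard felt slow.",
        "Pricing seems expensive for a small team.",
        "Loading the report takes forever.",
    ]
    for theme, count in theme_frequency(interviews, THEME_KEYWORDS).most_common():
        print(theme, count)
```

Substring matching is crude (for example, "lag" also matches "flag"), which is one small illustration of why the designer's critical review of the synthesis still matters.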

That brief feeds into Figma AI for initial layout exploration. Instead of starting from a blank canvas, the designer starts from three AI-generated frames and immediately begins judging, editing, and elevating. Designing interfaces for AI products requires the same discipline — start with intent, not prompts.

Week 2 — Prototyping and testing:

A mid-fidelity prototype is assembled using AI-generated component variants. Design sprints work precisely because they force constraint, and AI makes that constraint more productive, not less necessary. The prototype goes into usability testing. Usability testing questions are drafted with AI assistance, reviewed by the lead designer, and refined. Findings come back within 48 hours.

The handoff document — annotations, token references, interaction notes — is partly auto-generated by Figma AI and reviewed before engineering ingestion.

The result: a two-week sprint that previously yielded one tested concept now regularly yields two or three, with equivalent quality. That is the actual compounding value of AI in design for a startup operating under time and resource constraints.

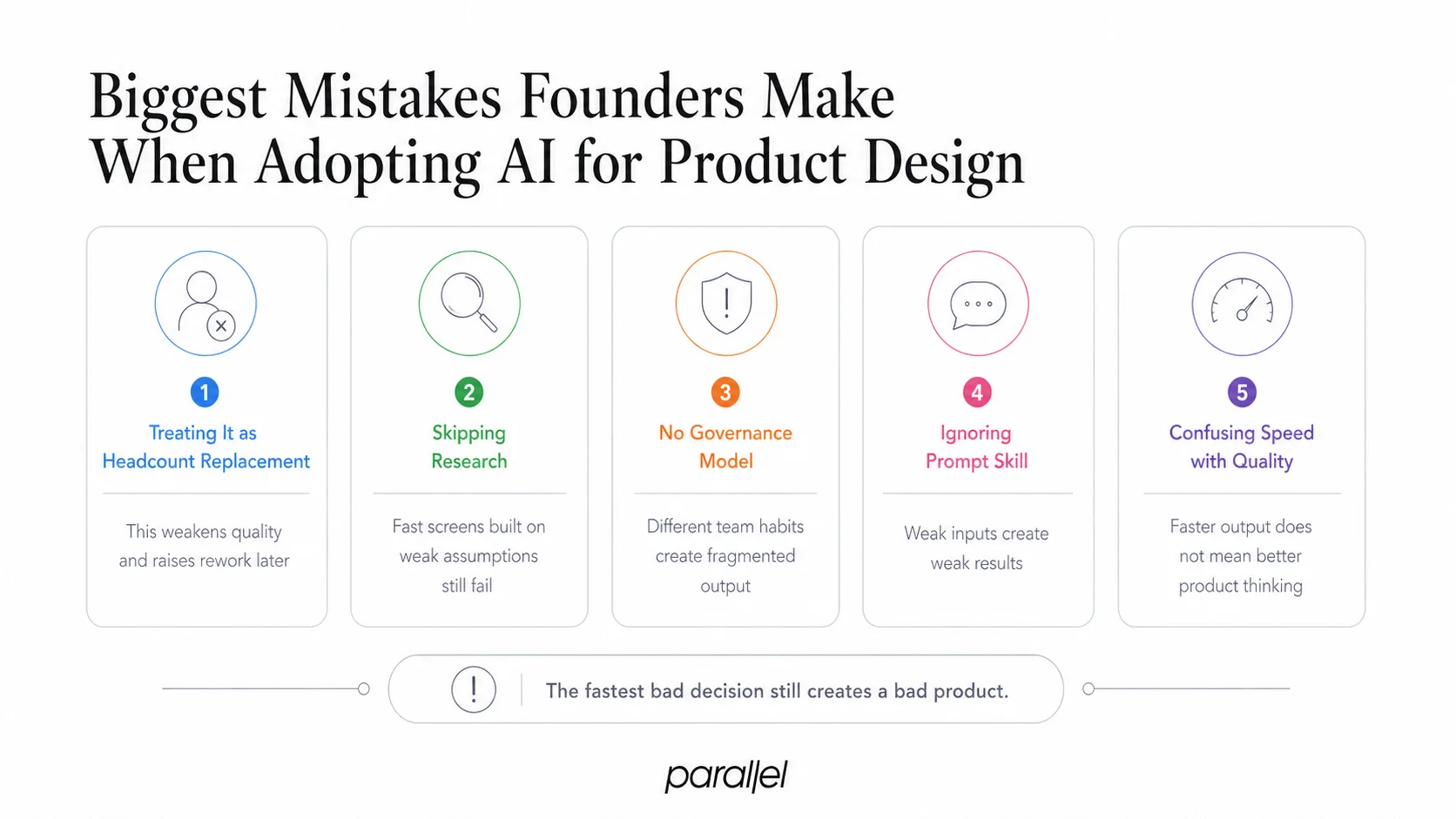

Biggest Mistakes Founders Make When Adopting AI for Product Design

I've seen these patterns consistently across early-stage companies.

- Treating AI as a headcount replacement: The logic sounds financially clean — one AI tool, one fewer designer. In practice, it produces lower-quality output, higher rework costs, and a product that looks like it was designed by a committee of averages. AI amplifies skilled designers. It does not substitute for them.

- Skipping the research phase: Founders under pressure compress discovery. AI makes this temptation worse — it can generate screens so quickly that teams skip the step of understanding what users actually need. Beautiful AI-generated UI built on unvalidated assumptions still fails.

- Adopting without a governance model: If everyone on the team is using AI tools differently, with no shared standards for review or output quality, you get design fragmentation at speed. Establish clear acceptance criteria for AI-assisted outputs before you scale their use.

- Ignoring prompt literacy: A designer who can write precise, constraint-rich prompts gets dramatically better outputs than one who doesn't. This skill is teachable — and currently undertaught.

- Conflating speed with quality: AI can produce a screen in seconds. That screen being the right screen for your user — tested, validated, grounded in research — still requires the full discipline of product strategy. Speed is only valuable when it's pointed at the right problem.
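One lightweight way to give a governance model teeth is to write the acceptance criteria down as an explicit checklist that every AI-assisted output must clear before it moves downstream. The sketch below shows the idea. The check names and the `AIOutput` structure are hypothetical examples, and each team would define its own criteria.

```python
from dataclasses import dataclass, field

# Hypothetical team-wide acceptance criteria for AI-assisted outputs.
REQUIRED_CHECKS = ("designer_reviewed", "uses_design_tokens", "contrast_checked")


@dataclass
class AIOutput:
    name: str
    kind: str  # e.g. "layout", "copy", "component"
    checks: dict[str, bool] = field(default_factory=dict)


def missing_checks(output: AIOutput,
                   required: tuple[str, ...] = REQUIRED_CHECKS) -> list[str]:
    """Required checks that are absent or not yet passed, in checklist order."""
    return [check for check in required if not output.checks.get(check, False)]


def can_move_downstream(output: AIOutput) -> bool:
    """An output clears the gate only when every required check has passed."""
    return not missing_checks(output)


if __name__ == "__main__":
    draft = AIOutput(
        name="pricing-page-v2",
        kind="layout",
        checks={"designer_reviewed": True, "contrast_checked": True},
    )
    print(can_move_downstream(draft))  # False
    print(missing_checks(draft))       # ['uses_design_tokens']
```

The value is less in the code than in the shared list: once the criteria are explicit, everyone on the team reviews AI output against the same standard.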

AI Tools for UI Design: Comparison and How to Use AI to Speed Up Design Sprints in 2026

Speeding up design sprints with AI is not about using every tool available — it's about removing specific friction points in the sprint structure.

The highest-leverage interventions, in order of impact:

- AI research synthesis before sprint kick-off: Feed existing user data, session recordings, or interview transcripts through AI survey analysis or qualitative tools to surface themes in advance. This makes the sprint's problem definition sharper from day one.

- Prompt-driven ideation in the diverge phase: Use Figma AI or Framer AI to generate 6–8 layout concepts from a brief. Designers vote and converge faster when they're critiquing existing options rather than generating from scratch.

- AI copy and content generation: UX copy is a notorious sprint bottleneck. Use AI to generate placeholder copy grounded in UX writing best practices, then edit to voice and context — never publish AI copy without a human pass.

- Auto-annotation for handoff: Figma AI and similar tools can annotate components, reducing the handoff prep step from a half-day task to under an hour.

- Post-sprint retrospective synthesis: Use AI to process retrospective notes and surface recurring friction patterns across multiple sprints.

For a comparison of tools specifically suited to sprint contexts:

Thematic AI tools in particular have become a genuine competitive advantage for teams running lean research operations — they surface signals from qualitative data at a speed that was previously impossible without a full-time researcher.

Conclusion

AI in design is not a trend to watch — it's an operational reality that's already separating high-velocity product teams from the rest.

- AI accelerates ideation, synthesis, and handoff — but human judgment remains the quality gate.

- The biggest adoption failures come from skipping governance, not from choosing the wrong tools.

- For startups, the real advantage is iteration speed: more tested concepts per sprint, less time between insight and prototype.

- The future of design with AI belongs to teams who treat it as infrastructure — not inspiration.

Build with intent. Let AI handle the repetitive. Keep thinking at the centre.

Frequently Asked Questions

Q1: What is AI in design, in plain terms?

AI in design means using machine learning and generative models to assist with tasks across the design process — from synthesising user research and generating layout concepts to automating component annotation and flagging accessibility issues. It spans tools like Figma AI, Adobe Sensei, and Midjourney.

Q2: Does AI replace UX designers?

No. AI removes repetitive execution work, which frees designers to focus on research, strategic thinking, and judgment. The real impact of AI on designers is a shift in the work, not an elimination of it. Teams still need humans to interpret data, empathise with users, and make decisions that require context.

Q3: What's the best AI design tool for an early-stage startup?

Figma AI is the most practical entry point for product design work: it sits inside your existing workflow and doesn't require a new toolchain. For visual exploration, use Midjourney or DALL-E. For research synthesis on a lean team, use a dedicated qualitative AI tool like Dovetail.

Q4: How does AI help with accessibility compliance?

Tools like Adobe Sensei and Figma's accessibility plugins can surface contrast failures, missing alt text, and structural issues against the W3C's Web Content Accessibility Guidelines (WCAG) before designs reach engineering. AI flags these consistently and early, but designers still need to understand and apply the underlying principles.

Q5: How long does it take to integrate AI into an existing design workflow?

A single high-friction task — like research synthesis or copy generation — can be integrated in under two weeks. A full workflow transformation typically takes one to two quarters, including the time needed to build prompt literacy across the team and establish review governance.

Q6: What ethical risks should product leaders know about?

The primary risks are bias in training data producing outputs that misrepresent or exclude user groups, over-reliance on AI-generated patterns that flatten originality, and teams skipping research because AI can generate screens quickly. Reviewing ethical considerations in AI design before scaling adoption is not optional — it's due diligence.
